Open Password – Monday,

17 February 2020

# 707

Outsell – AI – Regulation – Hugh Logue – US Government – White House – New York – California – San Francisco – London – Clearview – Ron Wyden – FTC – Algorithmic Accountability Act – Centre for the Governance of AI – EU – GDPR – European Commission – Trustworthy AI – OECD – Recommendation on Artificial Intelligence – Stephen Schwarzman – University of Oxford – MIT – US Election – BSI – Compliance Solution Market – Journal of Documentation – Open Password – T. Gorichanaz – Luciano Floridi – Hans-Christoph Hobohm – Book Symposium Format – Logic of Information – European Union – Ursula von der Leyen – Artificial Intelligence – Exasol – Data Teams

Outsell’s Contribution for February

New AI Regulations on the Horizon

Making Artificial Intelligence Ethical,

Transparent and Accountable

By Hugh Logue, Director & Lead Analyst – Email: hlogue@outsellinc.com

The debate around the regulation and governance of AI is set to intensify for the information, data, and software sector in 2020.

___________________________________________________________________

Important Details

___________________________________________________________________

The US Government recently released a set of principles to regulate the future development of artificial intelligence in the United States. The White House is taking a laissez-faire stance on AI development, which encourages agencies to provide “fairness, non-discrimination, openness, transparency, safety, and security” in all aspects of software development.

The White House’s set of principles comes after New York passed a new law in November prohibiting consumer reporting agencies and lenders from using data from consumers’ social media networks in their algorithms to determine creditworthiness. California also introduced legislation in July 2019 requiring that companies disclose when a person is communicating with an automated bot either online or by telephone. The potential to abuse facial recognition technology also led San Francisco to become the first US city to ban the use of this technology. This contrasts with London: the Metropolitan Police announced this month that it will use live facial recognition cameras across the city’s streets.

A recent article in The New York Times exposed the practices of a little-known startup, Clearview, which enables law enforcement agencies to cross-reference images of people’s faces with a database of three billion online photos, including ones scraped from Facebook, YouTube, and Twitter, with links back to the sites the images came from. The company claims that its tool finds matches up to 75% of the time. In response, New Jersey’s attorney general requested that local police stop using Clearview, and Twitter sent the company a cease-and-desist letter for violating its policies.

In contrast to the White House’s laissez-faire approach to AI regulation, there are now calls for a more tightly regulated approach in the US. A bipartisan group in Congress is working on a proposal for a bill that would regulate the use of facial recognition in the private sector.

There are also other proposals, such as Senator Ron Wyden’s bill for an “Algorithmic Accountability Act” that would require “automated decision systems” to demonstrate that they are free of race, gender, and other biases before they are deployed. The act would ask the Federal Trade Commission to create rules requiring companies to regularly audit their machine-learning systems for accuracy, fairness, bias, and discrimination, subject to certain exceptions. While this bill is highly unlikely to ever be enacted, the approach may be picked up and modified by others, especially in an election year at a time when public opinion is increasingly mistrustful of AI. A survey conducted in 2018 by the Centre for the Governance of AI found that 84% of people in the US believe that AI should be carefully managed.

There is also growing pressure for increased regulation of AI in other countries as the technology starts to control important aspects of people’s lives, such as medical diagnoses, loan applications, and recruitment decisions. In the EU, the GDPR already prohibits some unethical excesses of AI, such as automated decision-making, unless safeguards are in place. However, the EU plans to go further: in January, the European Commission announced that it is considering a ban on the use of facial recognition in public areas for up to five years, with exceptions for security and research projects.

In April 2019, the European Commission published guidelines for “Trustworthy AI” that provide principles for AI developers to follow, including clear ethical principles and a checklist to use when developing AI systems. Beyond the US and the EU, in May 2019, the OECD’s member nations and six non-member nations (Argentina, Brazil, Colombia, Costa Rica, Peru, and Romania) endorsed the organization’s non-binding Recommendation on Artificial Intelligence, the first intergovernmental standard on AI. Among other things, it calls for ethical regulatory frameworks for the development and application of AI.

Academic institutes are also researching the regulation of AI. In 2019, Stephen Schwarzman, the CEO of the private equity firm Blackstone, donated £150 million to the University of Oxford to study the ethics of AI, which followed a similar donation of $350 million he made to the Massachusetts Institute of Technology. The AI Now Institute at New York University is calling for a private sector ban on the use of AI micro-emotion software that can detect changes in people’s faces, voices, or body language that may indicate if a person is lying, such as in a job interview, loan application, or insurance claim.

___________________________________________________________________

Why This Matters

___________________________________________________________________

The battle over how to regulate AI will intensify in 2020, with different camps forming around yet another divisive issue. Whether the light-touch or the highly regulated approach prevails will have a huge impact on data, information, and analytics businesses. It comes at a time when companies in our sector are investing heavily in new AI solutions that enable their customers to make better forecasts, anticipate market behaviour, and better understand consumer needs. Regulation of new technologies with unknown future applications always has unforeseen consequences, but providers need to avoid straying into clearly ethically questionable territories if they want to avoid disruption by regulation.

Those providers that are planning to develop new AI solutions in areas that could prove ethically controversial, such as prescriptive decision-making tools, might consider holding back large investments until they have more regulatory certainty after the US election in November. Providers need to adopt best practices in the design of their tools now so that they are transparent and enable people who are the subject of AI processes to be informed, correct any errors, or withdraw their consent. Companies across the economy also need to collaborate to adopt standards now that could be incorporated into any future regulations or could result in a voluntary self-regulation model. For example, BSI, the global business standards company, developed industry standards in 2016 for AI systems to make them ethical, transparent, and accountable.

In all this, there will be opportunities to develop compliance tools, training, and information solutions. A new AI regulatory change and compliance solutions market will emerge as organizations struggle to keep up with the changes. Customers’ uncertainty around the regulations will be compounded by confusion over the various AI technologies and cross-border activities. While some information, data, and analytics providers may only see the inconvenience of new AI regulations to their own internal processes, they are missing the opportunity of developing and selling solutions that help their customers deal with such difficulties.

Journal of Documentation

Critical Engagement

with Floridi’s Approach

in Book Symposium Format

Part of Open Password’s approach is to provide a platform for critical debate on relevant and current topics. One example where this has succeeded, at least to some extent, is “Zukunft der Informationswissenschaft – Hat die Informationswissenschaft eine Zukunft?” (“The Future of Information Science: Does Information Science Have a Future?”), which is now also available as a book. Gorichanaz, T., J. Furner, L. Ma et al. draw attention to a further example: “Information and design: book symposium on Luciano Floridi’s The Logic of Information.” In: Journal of Documentation. 76(2020) no. 2, pp. 586-616. [https://doi.org/10.1108/JD-10-2019-0200]. Floridi’s work was introduced in Open Password by Hans-Christoph Hobohm.

The aim of Gorichanaz and his co-authors is “to review and discuss Luciano Floridi’s 2019 book The Logic of Information: A Theory of Philosophy as Conceptual Design, the latest instalment in his philosophy of information (PI) tetralogy, particularly with respect to its implications for library and information studies (LIS). … Nine scholars with research interests in philosophy and LIS read and responded to the book, raising critical and heuristic questions in the spirit of scholarly dialogue. Floridi responded to these questions. … Floridi’s PI, including this latest publication, is of interest to LIS scholars, and much insight can be gained by exploring this connection. It seems also that LIS has the potential to contribute to PI’s further development in some respects. … Floridi’s PI work is technical philosophy for which many LIS scholars do not have the training or patience to engage with, yet doing so is rewarding. This suggests a role for translational work between philosophy and LIS. … The book symposium format, not yet seen in LIS, provides forum for sustained, multifaceted and generative dialogue around ideas.”

European Union

Regulating Artificial Intelligence

on the Model of the GDPR

Ursula von der Leyen, the new president of the European Commission, has pledged to introduce GDPR-style legislation to regulate artificial intelligence (AI). “With the GDPR, we set the pattern for the world. We have to do the same with artificial intelligence. It is not about damming up the flow of data. It is about making rules that define how to handle data responsibly. For us the protection of a person’s digital identity is the overriding priority.”

Von der Leyen also vowed to explore new ways in which big data can be used to create wealth for business and societies. She said that she would make an effort to prioritise investment in AI, through the Multiannual Financial Framework as well as with the increased use of public-private partnerships. On the subject of technological sovereignty, von der Leyen urged people and organisations to pool resources, money, research capacity and knowledge and to put that into practice.

Source: BIIA

Exasol

Two out of Three Data Teams Find

No Relevant Results

with AI Applications

Exasol AG, maker of an in-memory analytics database, has concluded from a survey of 2,000 decision-makers in Germany, the UK, the USA, and China that 68 percent of all data teams are unable to draw from their data the insights their company needs for better decision-making.

As a result, companies cannot operate in a data-driven way. In addition, 80 percent of all data leaders stated that their current IT infrastructure makes data democratization more difficult. More than 80 percent of the companies surveyed report performance problems when data resides in different environments.

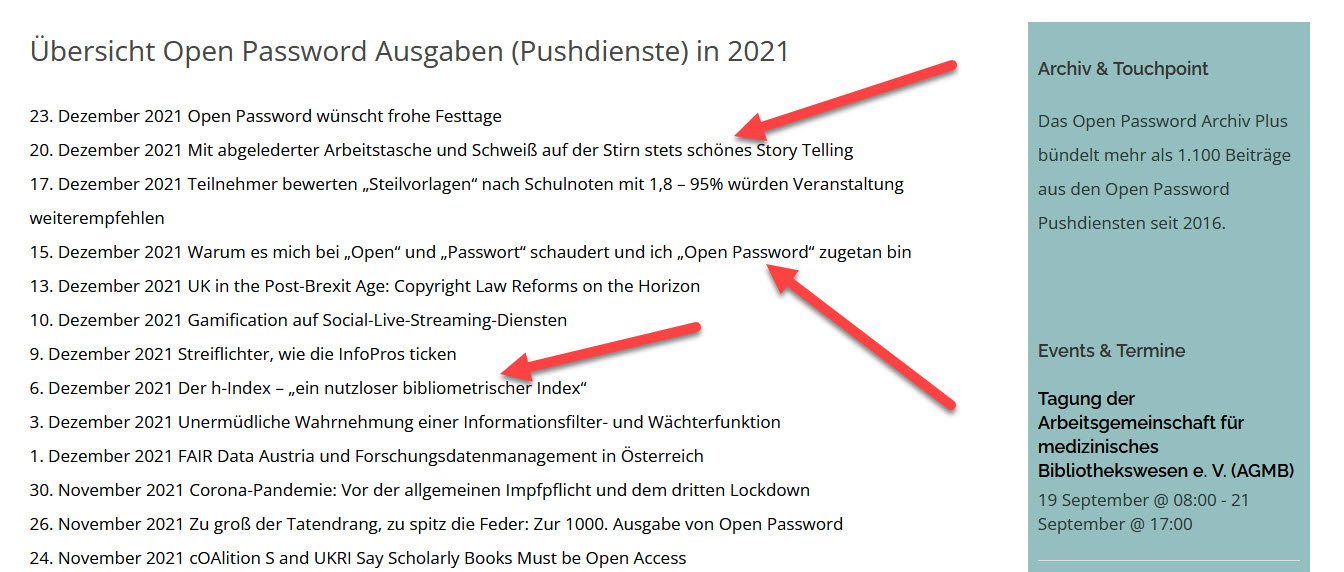

Open Password

Forum and News

for the Information Industry

in the German-Speaking World

New issues of Open Password appear four times a week.

To subscribe to the e-mail service free of charge, please register at www.password-online.de.

The current issue of Open Password is available on the web immediately after publication at www.password-online.de/archiv. The same applies to all earlier issues.

International Co-operation Partner:

Outsell (London)

Business Industry Information Association/BIIA (Hong Kong)
